AI 2025-11-25

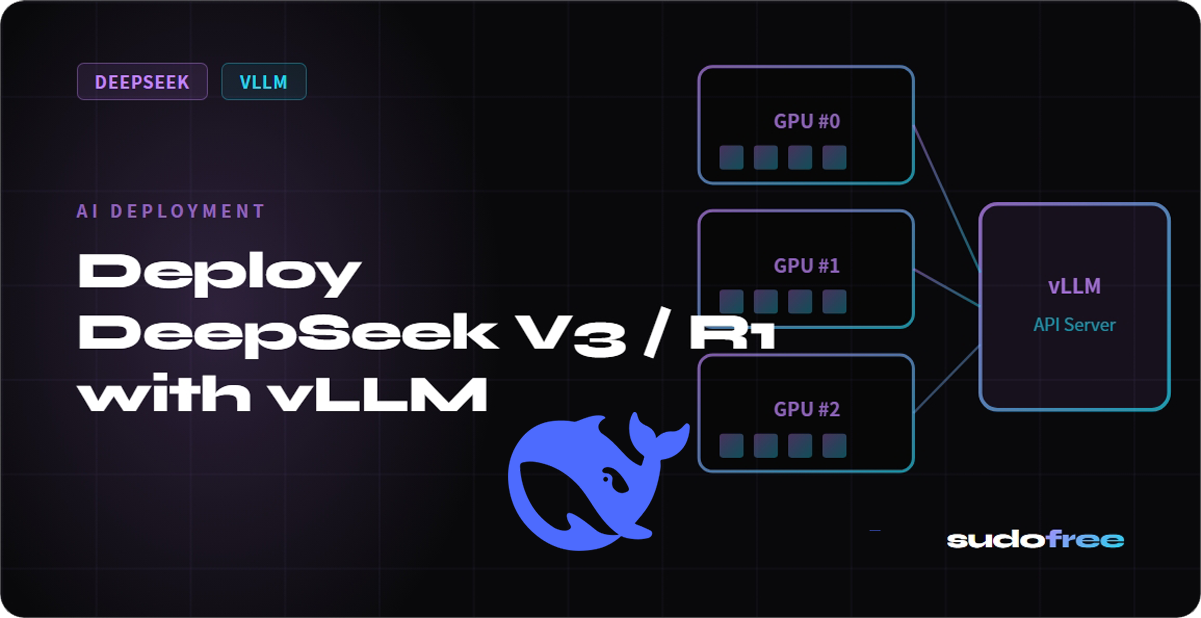

Deploy DeepSeek V3 / R1 with vLLM

Requirements

DeepSeek V3 is a 671B-parameter mixture-of-experts (MoE) model and requires a multi-GPU cluster to serve. For testing on smaller hardware, use one of the DeepSeek-R1 distill models instead.
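To see why the full model needs a cluster, a rough weights-only estimate helps. The sketch below is back-of-the-envelope arithmetic, not a sizing tool: the byte widths (1 byte per parameter for FP8, 2 for BF16) and the nominal 7e9 parameter count for the distill model are assumptions, and the KV cache and activations need additional memory on top.

```python
def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Approximate memory for model weights alone, in decimal gigabytes."""
    return num_params * bytes_per_param / 1e9


# Assumed figures: 671e9 parameters at 1 byte each (FP8) for DeepSeek V3,
# and a nominal 7e9 parameters at 2 bytes each (BF16) for the 7B distill.
v3_gb = weight_memory_gb(671e9, 1)
distill_gb = weight_memory_gb(7e9, 2)

print(f"DeepSeek V3 weights: ~{v3_gb:.0f} GB")
print(f"7B distill weights:  ~{distill_gb:.0f} GB")
```

Under these assumptions the V3 weights alone exceed the memory of any single GPU, while the 7B distill model is in the range of a single 24 GB card.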

Install vLLM

    pip install vllm

Run

    python -m vllm.entrypoints.openai.api_server \
        --model deepseek-ai/DeepSeek-R1-Distill-Qwen-7B \
        --port 8000 \
        --max-model-len 8192

OpenAI-Compatible API
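Loading the model can take a while, so it may help to wait until the server answers before sending requests. The following is a minimal sketch that polls the server's `/v1/models` endpoint using only the standard library. The helper name `wait_until_ready` and its timeout defaults are illustrative choices, not part of vLLM.

```python
import time
import urllib.request


def wait_until_ready(url: str = "http://localhost:8000/v1/models",
                     timeout_s: float = 300.0,
                     interval_s: float = 2.0) -> bool:
    """Poll `url` until it answers with HTTP 200 or `timeout_s` elapses."""
    deadline = time.monotonic() + timeout_s
    while time.monotonic() < deadline:
        try:
            with urllib.request.urlopen(url, timeout=5) as resp:
                if resp.status == 200:
                    return True
        except OSError:
            # Connection refused, timeout, or HTTP error: not ready yet.
            pass
        time.sleep(interval_s)
    return False
```

Calling `wait_until_ready()` after starting the server returns `True` once the endpoint responds, or `False` if the timeout expires first.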

    curl http://localhost:8000/v1/chat/completions \
        -H 'Content-Type: application/json' \
        -d '{"model":"deepseek-ai/DeepSeek-R1-Distill-Qwen-7B","messages":[{"role":"user","content":"Hello!"}]}'
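The same request can be sent from Python. The sketch below mirrors the curl call using only the standard library. The helper names `build_chat_request` and `chat` are hypothetical, and the response parsing assumes the standard OpenAI chat completions shape (`choices[0].message.content`).

```python
import json
import urllib.request

BASE_URL = "http://localhost:8000/v1"
MODEL = "deepseek-ai/DeepSeek-R1-Distill-Qwen-7B"


def build_chat_request(prompt: str, model: str = MODEL) -> urllib.request.Request:
    """Build a POST request with the same JSON body as the curl example."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt: str, model: str = MODEL) -> str:
    """Send the prompt to the running server and return the reply text."""
    with urllib.request.urlopen(build_chat_request(prompt, model)) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

With the server running, `chat("Hello!")` should return the model's reply as a string.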
